The ~Million Variable Universal Optimization Solver

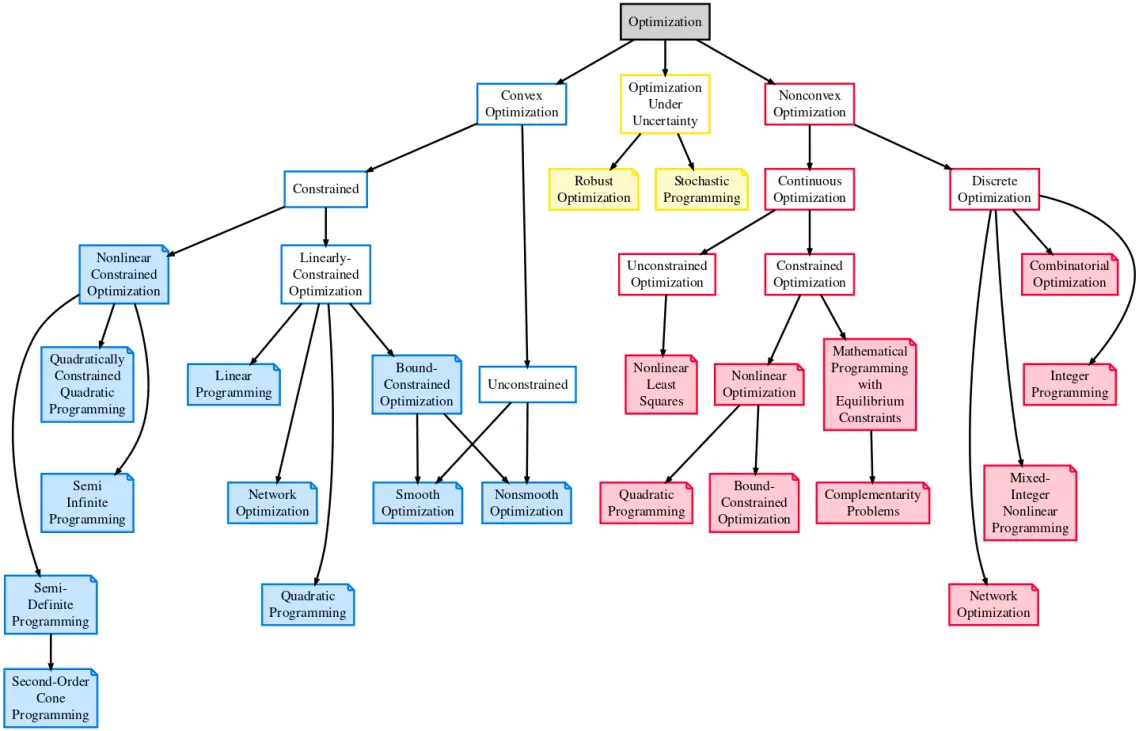

Discrete, Binary, Mixed-Integer

[Un]Constrained

Multi-Objective

Stochastic / Robust

[Non]Linear

Combinatorial


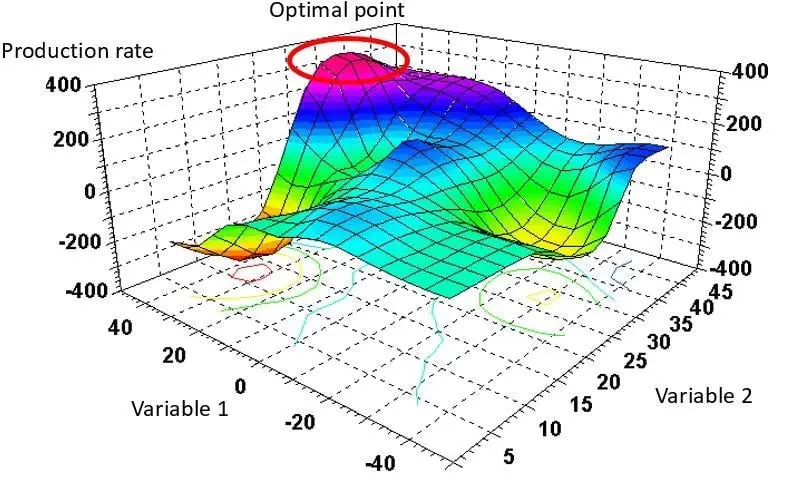

Because 90% of the people who solve optimization problems use gradient-based methods, and those methods are useless for NP-hard, non-linear problems, which are pretty much everything we want to solve.

Because the other 10% use what they call gradient-"free" (???) methods. These don't calculate gradients; they use differences instead. Despite the fancy terminology, this approach is just as useless.
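To make the "differences" point concrete, here is a minimal sketch of a central finite-difference gradient estimate, the kind of difference-based estimate the paragraph above describes. It assumes a continuous objective over real-valued variables; the function names and the quadratic test objective are illustrative only.

```python
from typing import Callable, List


def finite_difference_gradient(
    f: Callable[[List[float]], float], x: List[float], h: float = 1e-6
) -> List[float]:
    """Estimate the gradient of f at x using central differences.

    Each component costs two evaluations of f: f(x + h*e_i) and f(x - h*e_i).
    """
    grad = []
    for i in range(len(x)):
        x_plus = list(x)
        x_minus = list(x)
        x_plus[i] += h
        x_minus[i] -= h
        grad.append((f(x_plus) - f(x_minus)) / (2 * h))
    return grad


def quadratic(x: List[float]) -> float:
    """Illustrative objective with known gradient (2*x0, 6*x1)."""
    return x[0] ** 2 + 3 * x[1] ** 2


# Should print values very close to [2.0, 12.0].
print(finite_difference_gradient(quadratic, [1.0, 2.0]))
```

Note that this still needs 2n function evaluations per gradient estimate for n variables, and the perturbation `x ± h` has no meaning for binary or integer variables, so the approach does not carry over directly to discrete problems.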

Because we built our solvers on gradient-"agnostic" methods, and they can solve absolutely any optimization problem anyone can conceive.

Pretty much any optimization problem you can think of, with arbitrary constraints, objectives, variable types, and domains.
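The page does not describe how its gradient-agnostic methods work internally. As a stand-in for the general idea (optimizing from objective values alone, with no gradients or differences), here is a sketch using simulated annealing, a well-known method that is not the authors' solver. It mixes binary and integer variables, uses a non-linear objective, and enforces a constraint through a penalty term. The problem data (`WEIGHTS`, `VALUES`, `CAPACITY`), the penalty weight, and the cooling schedule are hypothetical choices for illustration.

```python
import math
import random
from typing import List, Tuple

# Hypothetical toy problem: pick items (binary x_i) and an integer k in [0, 10]
# subject to sum(w_i * x_i) + k <= CAPACITY.
WEIGHTS = [3, 5, 7, 2, 4]
VALUES = [4, 6, 9, 3, 5]
CAPACITY = 12


def objective(x: List[int], k: int) -> float:
    """Minimize negative total value plus a non-linear term in the integer k."""
    value = sum(v * xi for v, xi in zip(VALUES, x))
    return -value + (k - 3) ** 2


def penalty(x: List[int], k: int) -> float:
    """Penalty proportional to how far the capacity constraint is violated."""
    excess = sum(w * xi for w, xi in zip(WEIGHTS, x)) + k - CAPACITY
    return 100.0 * max(0, excess)


def neighbor(x: List[int], k: int, rng: random.Random) -> Tuple[List[int], int]:
    """Flip one random bit, or move k by +/-1 (clamped to [0, 10])."""
    x = list(x)
    if rng.random() < 0.5:
        i = rng.randrange(len(x))
        x[i] = 1 - x[i]
    else:
        k = min(10, max(0, k + rng.choice((-1, 1))))
    return x, k


def simulated_annealing(steps: int = 5000, t0: float = 10.0, seed: int = 0):
    """Return (best_x, best_k, best_cost) found by simulated annealing."""
    rng = random.Random(seed)
    x, k = [0] * len(WEIGHTS), 0  # feasible starting point
    cost = objective(x, k) + penalty(x, k)
    best = (list(x), k, cost)
    for step in range(steps):
        # Linear cooling schedule; the small constant keeps t strictly positive.
        t = t0 * (1 - step / steps) + 1e-9
        cand_x, cand_k = neighbor(x, k, rng)
        cand_cost = objective(cand_x, cand_k) + penalty(cand_x, cand_k)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if cand_cost <= cost or rng.random() < math.exp((cost - cand_cost) / t):
            x, k, cost = cand_x, cand_k, cand_cost
            if cost < best[2]:
                best = (list(x), k, cost)
    return best


print(simulated_annealing())
```

Only the `objective` and `penalty` functions know anything about the problem; the search loop just compares numbers, which is why this style of method can handle discrete, integer, and non-linear terms side by side.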

This particular solver is entirely classical, though it is often used in Variational Quantum Algorithms as the classical optimizer that drives the quantum circuits.
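The loop below sketches the role described above: a classical optimizer proposes circuit parameters, the circuit returns an expectation value, and the optimizer updates. As an assumption for illustration, the "circuit" is the textbook single-qubit case, where applying RY(θ) to |0⟩ gives ⟨Z⟩ = cos θ, computed classically here instead of measured on hardware. The optimizer is a simple derivative-free compass search, a stand-in rather than the solver described on this page; all names are hypothetical.

```python
import math


def expectation_z(theta: float) -> float:
    """Stand-in for a quantum circuit: <Z> after RY(theta) on |0>, i.e. cos(theta).

    In a real VQA this value would be estimated from measurements on a
    quantum device or simulator.
    """
    return math.cos(theta)


def compass_search(cost, theta: float, step: float = 0.5, tol: float = 1e-6):
    """Derivative-free 1-D compass search.

    Try theta + step and theta - step; keep the first improvement found,
    otherwise halve the step. Uses only cost evaluations, no gradients.
    """
    best = cost(theta)
    evaluations = 1
    while step > tol:
        for candidate in (theta + step, theta - step):
            value = cost(candidate)
            evaluations += 1
            if value < best:
                theta, best = candidate, value
                break
        else:
            # Neither direction improved: refine the step size.
            step /= 2
    return theta, best, evaluations


theta, energy, n_evals = compass_search(expectation_z, theta=0.1)
print(f"theta={theta:.6f}, energy={energy:.6f}, evaluations={n_evals}")
```

The optimizer should settle near θ = π, where ⟨Z⟩ reaches its minimum of -1. Because it treats the circuit as a black box, the same loop would work unchanged if `expectation_z` were replaced by a call that runs a real circuit.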

In pure software mode it supports smaller problems. The production environment reaches the full ~million-variable scale, because it runs on HPC clusters with custom-built hardware accelerators.

Any Variable Type

Any Domain

Arbitrary Constraints